Already Exist Data in Ctl File Upload in Oracle Database

2 September 2020

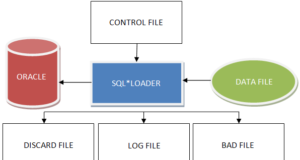

In this article, we will talk about how to load data into an Oracle database; namely, we will look at SQL*Loader, a utility for importing data into an Oracle database.

Loading and converting data

One of the most typical tasks of an Oracle database administrator (like any other) is to load data from external sources. Although this is normally done during the initial population of the database, it is often necessary to load data into various tables throughout the life of the production database.

Previously, database administrators loaded data from flat files into Oracle database tables using only the SQL*Loader utility.

Today, although SQL*Loader remains an important tool for loading data into Oracle databases, Oracle also offers another way to load tables and recommends using the external tables mechanism.

External tables use the functionality of SQL*Loader and allow you to perform complex transformations on the data before loading it into the database. With their help, data can not only be loaded into the database but also unloaded to external files and then loaded from these files into other Oracle databases.

In many cases, especially in data warehouses, the loaded data needs to be converted. Oracle offers several tools for converting data within a database, including SQL and PL/SQL technologies. For example, the powerful MODEL clause allows you to create complex multidimensional arrays and perform complex calculations between rows and arrays using simple SQL code.

In addition, Oracle offers a useful data replication mechanism called Oracle Streams, which allows you to transfer changes from one database to another. This mechanism can be used for various purposes, including the maintenance of a standby database.

This chapter deals with all these issues related to the loading and conversion of data. But first, of course, it provides a brief overview of what the extraction, transformation, and loading process is all about.

A brief overview of the process of extracting, transforming, and loading data

Before launching any application against an Oracle database, the database must first be populated with data. One of the most typical data sources for populating a database is a set of flat files from legacy systems or other sources.

Previously, using SQL*Loader in conventional or direct mode was the only possible way to load this data from external files into database tables. From a technical point of view, SQL*Loader still remains the main utility supplied by Oracle for loading data from external files, but if you wish you can also use the external tables mechanism, which relies on SQL*Loader functionality to access data contained in external data files.

Because the source data may contain superfluous information or data in a format other than that required by the application, it is often necessary to convert it in some way before the database can use it. Data conversion is a particularly common requirement for data warehouses, which need data to be extracted from multiple sources. A preliminary or basic conversion of the source data can be performed while running the SQL*Loader utility itself.

However, complex data conversion requires separate steps, and there are several methods to manage this process. In most warehouses, data goes through three main stages before it can be analyzed: extraction, transformation, and loading, together called the ETL process. Below is a description of what each of these steps represents.

- Extraction is the process of identifying and extracting source data, possibly in different formats, from multiple sources, not all of which may be relational databases.

- Transformation is the most complex and time-consuming of these three processes and may involve applying complex rules to the data, as well as performing operations such as aggregating the data and applying various functions to it.

- Loading is the process of placing data in database tables. It may also involve index maintenance and the enforcement of table-level constraints.

Previously, organizations used two different methods to perform the ETL process: transform and then load, or load and then transform. The first method involves cleaning or transforming data before it is loaded into Oracle tables.

This transformation is usually performed by custom-developed ETL processes. As for the second method, in most cases it does not take full advantage of Oracle's built-in transformation capabilities; instead, it involves first loading data into intermediate tables and moving it to the final tables only after it has been transformed within the database itself.

Intermediate tables play a key role in this method. Its disadvantage is that the tables have to hold several types of data, some of which are in their original state, while others are already in their final state.

Today, Oracle Database 11g offers impressive ETL capabilities that allow you to load data into the database in a new way, namely by transforming it during loading. By using Oracle Database to perform all stages of ETL, the usually time-consuming ETL processes can be carried out fairly easily. Oracle provides a whole set of auxiliary tools and technologies that reduce the time it takes to load data into the database while also simplifying all related work. In particular, the ETL solution offered by Oracle includes the following components.

- External tables. External tables provide a way to combine the loading and conversion processes. Using them removes the need for cumbersome and time-consuming intermediate tables during data loading. They will be described in more detail later in this chapter, in the section "Using external tables to load data".

- Multitable inserts. The multitable insert mechanism allows you to insert data not into one table but into several tables at once, using different criteria for different tables. It eliminates the need for an extra step such as dividing data into separate groups before loading it. It will be discussed in more detail later in this chapter, in the section "Using multiple table inserts".

- Inserts and updates (upserts). This is a made-up name for a technique that allows you to either insert data into a table or simply update rows with a single MERGE SQL statement. The MERGE statement will either insert new data or update rows if such data already exists in the table. It can make the loading process very easy because it eliminates the need to worry about whether the table already contains such data. It will be explained in more detail later in this chapter, under "Using the MERGE Operator".

- Table functions. Table functions generate a set of rows as output. They return an instance of a collection type (i.e., a nested table or one of the VARRAY data types). They are similar to views, but instead of defining the conversion process declaratively in SQL, they define it procedurally in PL/SQL. Table functions are very helpful when performing large and complex conversions, as they allow you to perform them before loading data into the data warehouse. They will be discussed in more detail later in this chapter, in the section "Using table functions to convert data".

- Transportable tablespaces. These tablespaces provide an efficient and fast way to move data from one database to another. For example, they can be used to easily and quickly migrate data between an OLTP database and the data warehouse. We will talk more about them in new blog articles.

Just for the record! You can also use the Oracle Warehouse Builder tool (OWB) to load data efficiently. It is a wizard-driven tool for loading data into a database via SQL*Loader.

It allows loading data both from Oracle databases and from flat files. It also allows you to extract data from other databases such as Sybase, Informix, and Microsoft SQL Server through the Oracle Transparent Gateways mechanism. It combines ETL and design functions in a very user-friendly format.

In the next section, you will learn how to use the SQL*Loader utility to load data from external files. It will also help you understand how to use external tables to load data. After the description of the external tables mechanism, you will learn about the various methods offered in Oracle Database 11g for data conversion.

Using the SQL*Loader utility

The SQL*Loader utility, which comes with the Oracle database server, is often used by database administrators to load external data into Oracle databases. It is an extremely powerful tool and can do more than just load data from text files. Below is a brief list of its other features.

- It allows you to convert data before or during the load itself (although in a limited way).

- It allows loading data from several types of sources: disks, tapes, and named pipes, and it can use multiple data files in the same load session.

- It allows loading data over the network.

- It allows you to selectively load data from the input file based on various conditions.

- It allows loading both a whole table and only a certain part of it, as well as loading data into several tables simultaneously.

- It allows you to perform parallel data loading operations.

- It allows you to automate the loading process so that it is carried out at a scheduled time.

- It allows you to load complex object-relational data.

The SQL*Loader utility can load data in several modes.

- Conventional loading mode. In this mode, SQL*Loader reads several rows at a time and saves them in a bind array, then inserts the entire array into the database at once and commits the operation.

- Direct-path loading mode. In this mode, no INSERT SQL statement is used to load data into Oracle tables. Instead, column array structures are created from the data to be loaded, which are then used to format Oracle data blocks, after which they are written directly to the database tables.

- External table loading mode. Oracle's newer external tables mechanism is based on SQL*Loader functionality and allows you to access the data contained in external files as if it were part of the database tables. When you use the ORACLE_LOADER access driver to create an external table, you are in fact using SQL*Loader functionality. Oracle Database 11g also offers a new access driver, ORACLE_DATAPUMP, which allows you to write to external tables.

The first two loading modes (or methods) each have their advantages and disadvantages. Because direct-path loading bypasses Oracle's SQL mechanism, it is much faster than conventional loading.

However, in terms of opportunities for data conversion, the conventional mode is far superior to the direct mode because it allows you to apply a number of different functions to the table columns during data loading. Therefore, Oracle recommends using the conventional method for loading small amounts of data and the direct method for loading large amounts of data.

Direct-path loading will be considered in more detail immediately after the basic functionality of SQL*Loader and the conventional loading method have been covered. As for the external table mode, it will be discussed in more detail later in this chapter, in the section "Using external tables to load data".

The process of loading data using the SQL*Loader utility includes two main steps.

- Selecting a data file that contains the data to be loaded. Such a file usually has the extension .dat and contains the necessary data. The data can be in several formats.

- Creating a control file. The control file tells SQL*Loader how the data fields should be placed in the Oracle table and whether the data should be converted in any way. Such a file usually has the .ctl extension.

The control file provides a scheme for mapping the table columns to the data fields in the input file. A separate data file is not a mandatory requirement for a load. If desired, the data can be included in the control file itself, after the load control information such as the field list.

This data can be provided either as fixed-length fields or in a free format with a special delimiter character, such as a comma (,) or a pipe (|). Since the control file is so important, let us start with it.

Studying the SQL*Loader control file

The SQL*Loader control file is a simple text file that specifies important details of the load job, such as the location of the source data file, as well as a scheme for mapping the data in this source file to the columns in the target table.

It may also specify any conversion operations to be performed during the load, as well as the names of the log files to be used for the load and the names of the files used to capture bad and discarded data. In general, the SQL*Loader control file provides instructions on the following aspects:

- the source of the data to be loaded into the database;

- the specification of the columns in the target table;

- the features of the formatting used in the input file;

- the mapping of the input file fields to the target table columns;

- data conversion rules (applying SQL functions);

- the location of log files and error files.

Listing 13.1 gives an example of a typical SQL*Loader control file. The SQL*Loader utility treats the data rows contained in the source files as records, and therefore the control file may also specify the format of the records.

Please note that a separate data file is also allowed. In this example, however, the data to be loaded follows the control information directly, through the INFILE * specification in the control file. This specification indicates that the data to be loaded will follow the control information.

For a one-time load, it is probably best to keep things as simple as possible and place the data in the control file itself. The BEGINDATA keyword shows SQL*Loader where the part of the control file containing the data begins.

LOAD DATA

INFILE *

BADFILE test.bad

DISCARDFILE test.dsc

INSERT

INTO TABLE tablename

FIELDS TERMINATED BY ',' OPTIONALLY ENCLOSED BY '"'

(column1 POSITION (1:2) CHAR,

column2 POSITION (3:9) INTEGER EXTERNAL,

column3 POSITION (10:15) INTEGER EXTERNAL,

column4 POSITION (16:16) CHAR

)

BEGINDATA

AY3456789111111Y

/* Other data . . .*/

The part of the control file that describes the data fields is called the field list. In the control file shown in Listing 13.1, this field list looks like this:

(column1 POSITION (1:2) char,

column2 POSITION (3:9) integer external,

column3 POSITION (10:15) integer external,

column4 POSITION (16:16) char

)

Here you can see that the field list specifies the names of the fields, their positions, data types, delimiters, and any applicable conditions.
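To make the idea of a positional field list more tangible, here is a minimal Python sketch that slices the sample record from Listing 13.1 the way the POSITION ranges describe. It is only an illustration of the mapping, not how SQL*Loader itself is implemented; the names FIELD_LIST and parse_record are hypothetical.

```python
# Hypothetical field list mirroring Listing 13.1:
# (name, start, end, type), with 1-based inclusive positions as in POSITION(start:end).
FIELD_LIST = [
    ("column1", 1, 2, "CHAR"),
    ("column2", 3, 9, "INTEGER EXTERNAL"),
    ("column3", 10, 15, "INTEGER EXTERNAL"),
    ("column4", 16, 16, "CHAR"),
]


def parse_record(record, field_list=FIELD_LIST):
    """Slice one input record into named column values by position."""
    row = {}
    for name, start, end, datatype in field_list:
        raw = record[start - 1:end]  # convert 1-based inclusive to a Python slice
        # INTEGER EXTERNAL means the number is stored as readable text.
        row[name] = int(raw) if datatype == "INTEGER EXTERNAL" else raw
    return row


row = parse_record("AY3456789111111Y")
print(row)
```

Each tuple plays the role of one line of the field list: a column name, where its value sits in the record, and how the raw characters should be interpreted.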

In the control file, you can specify many parameters, which, roughly speaking, fall into the following groups:

- clauses related to loading;

- clauses related to data files;

- clauses concerning the mapping of tables and fields;

- command-line parameters defined in the control file.

The following subsections describe in more detail all of these different types of parameters that can be set in the control file to configure data loading.

Tip. If you are not sure which parameters to use for a SQL*Loader session, you can simply enter sqlldr at the operating system command line to see all available options. Running this command displays a list of all possible parameters and their default values on your particular operating system (if any).

The LOAD DATA keywords appear at the very beginning of the control file and simply mean that you need to load data from the input file into Oracle tables using SQL*Loader.

The INTO TABLE clause indicates which table the data needs to be loaded into. If you need to load data into several tables at once, you need to use one INTO TABLE clause for each table. The keywords INSERT, REPLACE, and APPEND tell the database how the data should be loaded.

If you use the INSERT clause, the table must be empty; otherwise, the load will generate an error and stop. The REPLACE clause tells Oracle to remove all existing rows from the table and then start loading new data. When loading with the REPLACE parameter, it will often appear as if the load hangs at first. In fact, at this point Oracle is clearing the table before starting the load. As for the APPEND clause, it tells Oracle to add new rows to the existing table data.

Clauses concerning data files

There are several clauses that can be used to specify the location and other characteristics of the data file or files from which data is to be loaded via SQL*Loader. The following subsections describe some of the most important of these clauses.

Specification of the data file

The name and location of the input file are specified using the INFILE parameter:

INFILE '/a01/app/oracle/oradata/load/consumer.dat'

If you do not want to use a separate input file, you can include the data in the control file itself. In that case, the file location is omitted and just the symbol * is specified in its place:

INFILE *

If you choose to include data in the control file itself, you must use the BEGINDATA clause before the start of the data:

BEGINDATA

Nicholas Alapati,243 New Highway,Irving,TX,75078

. . .

Physical and logical records

Each physical record in the source file is equivalent to a logical one by default, but if desired you can specify in the control file that one logical record should include several physical records. For example, in the following input file, all three physical records are also treated as three logical records by default:

Nicholas Alapati,243 New Highway,Irving,TX,75078

Shannon Wilson,1234 Elm Street,Fort Worth,TX,98765

Nina Alapati,2629 Skinner Drive,Flower Mound,TX,75028

To combine these three physical records into a single logical record, you can use either the CONCATENATE clause or the CONTINUEIF clause in the control file.

If the input data is in a fixed format, you can specify the number of lines to be read to form each logical record as follows:

CONCATENATE 4

Specifically, this CONCATENATE clause specifies that one logical record should be formed by combining four lines of data. If each data line consists of 80 characters, the new logical record will contain 320 characters. For this reason, when using the CONCATENATE clause, the record length clause (RECLEN) should also be defined together with it. In this case, this clause should look like this:

RECLEN 320
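The arithmetic behind CONCATENATE 4 and RECLEN 320 can be checked with a small Python sketch. It only models the idea of gluing every n physical lines into one logical record; the function name concatenate and the sample lines are assumptions made for illustration.

```python
def concatenate(physical_records, n):
    """Join every n physical records into one logical record (the idea behind CONCATENATE n)."""
    logical = []
    for i in range(0, len(physical_records), n):
        logical.append("".join(physical_records[i:i + n]))
    return logical


# Four hypothetical 80-character physical lines.
lines = ["A" * 80, "B" * 80, "C" * 80, "D" * 80]
logical_records = concatenate(lines, 4)
print(len(logical_records), len(logical_records[0]))
```

With four lines of 80 characters each, the single resulting logical record is 4 x 80 = 320 characters long, which is why the record length has to be raised accordingly.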

As for the CONTINUEIF clause, it allows you to combine physical records into logical ones by specifying one or more characters in a particular position. For example:

CONTINUEIF THIS (1:4) = 'next'

In this example, the CONTINUEIF clause indicates that if the four characters next are found at the start of a line, SQL*Loader should treat the following line as a continuation of the current one (the four characters and the word next were chosen arbitrarily: any characters may serve as continuation indicators).

When using fixed-format data, the CONTINUEIF character can be placed in the last column, as shown in the following example:

CONTINUEIF LAST = '&'

Here, the CONTINUEIF clause specifies that if an ampersand (&) character is found at the end of a line, the SQL*Loader utility should treat the next line as a continuation of the current one.
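The continuation logic of CONTINUEIF LAST = '&' can be sketched in a few lines of Python. This is a simplified model, not SQL*Loader's actual implementation: the function name continue_if_last is hypothetical, and dropping the marker from the assembled record is an assumption of this sketch.

```python
def continue_if_last(physical_records, marker="&"):
    """Merge lines ending with the marker with the line(s) that follow them."""
    logical, pending = [], ""
    for line in physical_records:
        if line.endswith(marker):
            # Assumption of this sketch: the marker itself is dropped.
            pending += line[:-len(marker)]
        else:
            logical.append(pending + line)
            pending = ""
    if pending:  # input ended while a continuation was still open
        logical.append(pending)
    return logical


records = continue_if_last([
    "Nicholas Alapati,&",
    "243 New Highway,Irving,TX,75078",
    "Nina Alapati,2629 Skinner Drive,Flower Mound,TX,75028",
])
print(records)
```

Here the first two physical lines become one logical record, while the third line, which has no trailing ampersand, stays a record of its own.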

Please note! Using either the CONTINUEIF or the CONCATENATE clause will slow down SQL*Loader, so it is still better to map physical records to logical records one to one. This is because combining multiple physical records into a single logical record requires SQL*Loader to perform additional scanning of the input data, which takes more time.

Record format

You can use one of the following three formats for your records.

- Stream format. This format is the most common and uses a special terminator character to mark the end of a record. When scanning an input file, the SQL*Loader utility knows that it has reached the end of a record when it comes across this terminator. If no terminator is specified, the default is the newline character (which in Windows must also be preceded by the carriage return character). The set of three records given in the previous example uses this format.

- Variable format. This format explicitly specifies the length of each record at its beginning, as shown in the following example:

INFILE 'example1.dat' "var 2"

06sammyy12johnson,1234

This line contains two records: the first is six characters long (sammyy) and the second is twelve characters long (johnson,1234). The var 2 clause tells SQL*Loader that all data records are of variable size and that each new record is preceded by a two-character length field.

- Fixed format. This format sets a specific fixed size for all records. Below is an example in which each record is 12 bytes long:

INFILE 'example1.dat' "fix 12"

sammyy,1234,johnso,1234

In this example, at first glance it seems that the record includes the whole line (sammyy,1234,johnso,1234), but the fix 12 clause indicates that this line in fact contains two full records of 12 characters each. Therefore, if a fixed format is used in the source data file, it is possible to have several records on each line.
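The variable and fixed record formats are easy to confuse, so here is a short Python sketch that splits the two sample lines above into records. It is a rough model under stated assumptions: the function names are hypothetical, and in the fixed-format case the trailing newline is assumed to count as the twelfth byte of the second record.

```python
def read_var_records(data, length_digits=2):
    """Split data where each record is preceded by a length field of length_digits characters."""
    records, pos = [], 0
    while pos < len(data):
        length = int(data[pos:pos + length_digits])
        pos += length_digits
        records.append(data[pos:pos + length])
        pos += length
    return records


def read_fixed_records(data, size):
    """Split data into consecutive records of exactly size characters."""
    return [data[i:i + size] for i in range(0, len(data), size)]


var_records = read_var_records("06sammyy12johnson,1234")
# Assumption: the line ends with a newline, which pads the second record to 12 bytes.
fixed_records = read_fixed_records("sammyy,1234,johnso,1234\n", 12)
print(var_records)
print(fixed_records)
```

In the variable format the length prefix tells the reader where each record ends, whereas in the fixed format the record boundary falls every 12 bytes regardless of where the line breaks are.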

Clauses concerning table and field mapping

During the load session, SQL*Loader takes data fields from data records and converts them into table columns. The table and field mapping clauses help in this process. With their help, the control file provides details about the fields, including column names, positions, types of data contained in the input records, delimiters, and conversion parameters.

The column name in the table

Each column in the table is clearly defined along with the position and data type of the value of the corresponding field in the input file. It is not necessary to load all columns in the table with values. If you omit any columns in the control file, they are automatically set to NULL.

Position

The SQL*Loader utility needs to know where the various fields are located in the input file. Fields are the individual elements in the data file, and there is no direct match between these fields and the columns in the table into which the data is loaded. The process of mapping the fields in the input data file to the table columns in the database is called field setting, and it takes the most CPU time during loading. The POSITION clause lets you set the exact position of the various fields in the data record. The position specified in this clause can be relative or absolute.

A relative position is the position of a field relative to the position of the previous field, as shown in the following example:

employee_id POSITION(*) NUMBER EXTERNAL 6

employee_name POSITION(*) CHAR 30

In this example, the POSITION clause instructs SQL*Loader to first load the employee_id field and then proceed to load the employee_name field, which starts at position 7 and is 30 characters long.

An absolute position simply specifies where each field begins and ends:

employee_id POSITION(1:6) INTEGER EXTERNAL

employee_name POSITION(7:36) CHAR
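The relationship between the relative and absolute forms can be verified with a small Python sketch that turns a list of field lengths into absolute start and end positions. The function name absolute_positions is hypothetical and the sketch assumes the fields follow one another without gaps.

```python
def absolute_positions(fields):
    """Convert (name, length) pairs into (name, start, end) with 1-based inclusive positions."""
    result, next_start = [], 1
    for name, length in fields:
        result.append((name, next_start, next_start + length - 1))
        next_start += length  # the next field starts right after this one
    return result


positions = absolute_positions([("employee_id", 6), ("employee_name", 30)])
print(positions)
```

A 6-character employee_id followed by a 30-character employee_name yields positions 1 to 6 and 7 to 36, matching the absolute example.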

Data Types

The data types used in the control file apply only to the input records and do not correspond to the data types of the columns in the database tables. The main data types that can be used in the SQL*Loader control file are listed below:

INTEGER(n) is a binary integer where n can be 1, 2, 4, or 8.

SMALLINT

CHAR

INTEGER EXTERNAL

FLOAT EXTERNAL

DECIMAL EXTERNAL

Delimiters

After specifying the data types, you can specify a delimiter to be used to separate fields. It can be defined using either the TERMINATED BY clause or the ENCLOSED BY clause.

The TERMINATED BY clause ends the field at the specified character, which marks the end of the field. Examples are given below:

TERMINATED BY WHITESPACE

TERMINATED BY ","

In the first example, the TERMINATED BY clause indicates that the field ends at the first whitespace character encountered, and in the second example, that a comma is used to separate the fields.

The ENCLOSED BY '"' clause indicates that a pair of double quotes should enclose the field. Below is an example of how to use this clause:

FIELDS TERMINATED BY ',' OPTIONALLY ENCLOSED BY '"'

Tip. Oracle recommends avoiding delimiters and specifying the field positions wherever possible (using the POSITION parameter). Specifying field positions saves the database from having to scan the data file to find the delimiters, thus reducing processing time.

Data conversion parameters

If you wish, you can have SQL functions applied to field data before it is loaded into table columns. Generally, only SQL functions that return single values can be used to convert field values. A field within a SQL string needs to be referenced using the :field_name syntax.

The SQL function or functions themselves must be specified after the data type associated with the field and enclosed in double quotes, as shown in the following examples:

field_name CHAR TERMINATED BY "," "SUBSTR(:field_name, 1, 10)"

employee_name POSITION(32:62) CHAR "UPPER(:ename)"

salary POSITION(75) CHAR "TO_NUMBER(:sal, '$99,999.99')"

commission INTEGER EXTERNAL ":commission * 100"

As is easy to see, applying SQL operations and functions to field values before they are loaded into tables makes it possible to convert data during the load itself.

Command-line parameters specified in the control file

The SQL*Loader utility allows you to specify a number of runtime parameters on the command line when invoking its executable. Parameters whose values should remain the same for all jobs are usually set in a separate parameter file, so that the command line can then be used simply to run SQL*Loader jobs, both interactively and as scheduled batch jobs.

The command line specifies only those parameters that are specific to a particular run, along with the name and location of the control file.

Alternatively, the runtime parameters can also be specified within the control file itself using the OPTIONS clause. Of course, the runtime parameters can always be specified when invoking SQL*Loader, but if they are repeated often, it is better to specify them in the control file using the OPTIONS clause.

Using the OPTIONS clause is especially convenient when the information to be specified on the SQL*Loader command line is so long that it exceeds the maximum command-line length accepted by the operating system.

For your information! Setting a parameter on the command line overrides the value specified for it in the control file.

The following subsections describe some of the most important parameters that can be set in the control file with the OPTIONS clause.

USERID parameter

The USERID parameter enables you to specify the name and password of the database user who has the privileges required for loading data:

USERID = samalapati/sammyy1

CONTROL parameter

The CONTROL parameter allows you to specify the name of the control file to be used for the SQL*Loader session. This control file may include the specifications of all loading parameters. Of course, it is also possible to load data using manually entered commands, but using a control file provides more flexibility and allows you to automate the loading process.

CONTROL = '/test01/app/oracle/oradata/load/finance.ctl'

DATA parameter

The DATA parameter allows you to specify the name of the input data file to be used for the load. By default, the name of such a file ends with the .dat extension. Please note that the data to be loaded need not be in a separate data file. If desired, it may also be included in the control file, right after the details concerning the load itself.

DATA = '/test02/app/oracle/oradata/load/finance.dat'

BINDSIZE and ROWS parameters

The BINDSIZE and ROWS parameters let you specify the size of the bind array used in normal load mode. When loading in normal mode, SQL*Loader does not insert data into the table row by row. Instead, it inserts an entire set of rows into the table at once; this set of rows is called a bind array, and either the BINDSIZE or the ROWS parameter determines its size.

The BINDSIZE parameter specifies the bind array size in bytes. In the author's system, this size was 256,000 bytes by default.

BINDSIZE = 512000

As for the ROWS parameter, it does not impose any restriction on the number of bytes in the bind array. Instead, it limits the number of rows that each bind array can contain; SQL*Loader multiplies the ROWS value by the calculated size of each row in the table. In the author's system, the default value of ROWS was 64.

ROWS = 64000

Just for the record! When values are set for both BINDSIZE and ROWS, SQL*Loader uses the smaller of the two resulting sizes for the bind array.
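The interaction between ROWS and BINDSIZE can be sketched with a little shell arithmetic. This is only an illustration under a simplifying assumption: the row size is passed in by hand (the function name effective_rows and the 100-byte row are hypothetical), whereas SQL*Loader calculates the row size itself from the field definitions.

```shell
# Hypothetical helper: estimate how many rows end up in one bind array.
# Arguments: ROWS value, BINDSIZE value in bytes, assumed row size in bytes.
effective_rows() {
    rows=$1
    bindsize=$2
    row_bytes=$3
    # Number of whole rows that fit into BINDSIZE bytes.
    fit=$(( bindsize / row_bytes ))
    # The smaller of the two limits wins.
    if [ "$rows" -lt "$fit" ]; then
        echo "$rows"
    else
        echo "$fit"
    fi
}

effective_rows 64000 512000 100   # prints 5120
```

With the values used above (ROWS=64000, BINDSIZE=512000) and an assumed 100-byte row, the byte limit is the tighter one, so only 5120 rows would fit in each bind array.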

The DIRECT parameter

If DIRECT is set to true (DIRECT=true), SQL*Loader uses the direct load mode rather than the normal one. The default value for this parameter is false (DIRECT=false), meaning that the normal load mode is used by default.

The ERRORS parameter

The ERRORS parameter allows you to specify how many errors can occur before the SQL*Loader job is terminated. In most systems, this parameter is set to 50 by default. If you do not want to tolerate any errors, you can set this parameter to 0:

ERRORS = 0

LOAD parameter

With the LOAD parameter, you can specify the maximum number of logical records to be loaded into the table. By default, all records contained in the input data file are loaded.

LOAD = 10000

LOG parameter

The LOG parameter allows you to specify the name of the log file that SQL*Loader should use during the load. The log file, which will be shown later, provides a lot of useful information about the SQL*Loader session.

LOG = '/u01/app/oracle/admin/finance/logs/financeload.log'

BAD parameter

The BAD parameter allows you to specify the name and location of the bad file. If some records are rejected because of data formatting errors, SQL*Loader writes all of them to this file. For example, a field may exceed the length specified for it and, as a consequence, be rejected by SQL*Loader.

Note that records may be rejected not only by SQL*Loader but also by the database itself. For example, when you try to insert rows with duplicate primary key values, the database rejects them. Such records are also placed in the bad file. If the name of the bad file is not explicitly specified, Oracle creates the file automatically and gives it a default name that uses the name of the control file as the prefix.

BAD = '/u01/app/oracle/load/financeload.bad'

SILENT parameter

By default, SQL*Loader displays feedback messages on the screen and thus keeps you informed about the progress of the load. You can turn off the display of these messages with the SILENT parameter if you wish. Several values can be set for this parameter. For example, you can turn off the display of all types of messages by setting it to ALL:

SILENT = ALL

DISCARD and DISCARDMAX parameters

All records that are discarded during the load because they do not meet the selection criteria specified in the control file are placed in the discard file. By default, this file is not created. Oracle creates it only if there are discarded records, and even then only if it was explicitly specified. The DISCARD parameter, for example, is used to specify the name and location of the discard file:

DISCARD = '/test01/app/oracle/oradata/load/finance.dsc'

By default, SQL*Loader does not limit the number of discarded records; therefore, all logical records can be discarded. With the DISCARDMAX parameter, however, you can limit the number of discarded records.

Tip. All records are placed in both the bad file and the discard file in their original format. This makes it easy, particularly when loading large amounts of data, to edit these files and use them to reload the data that could not be loaded during the initial session.

PARALLEL parameter

The PARALLEL parameter allows you to specify whether SQL*Loader is allowed to run several parallel sessions when loading in direct mode:

sqlldr USERID=salapati/sammyy1 CONTROL=load1.ctl DIRECT=true PARALLEL=true

Parameter RESUMABLE

With the RESUMABLE parameter, you can enable the Resumable Space Allocation feature offered by Oracle (resuming an operation after a space allocation problem has been fixed). If this feature is enabled and a space problem occurs during the load, the job is only paused; the administrator is then notified and can allocate more space so that the job can continue without problems.

The Resumable Space Allocation feature is described in more detail in Chapter 8. By default, RESUMABLE is set to false, which means that Resumable Space Allocation is disabled. To enable it, simply set it to true (RESUMABLE=true).

The parameter RESUMABLE_NAME

The RESUMABLE_NAME parameter allows you to identify the specific load job that should be resumable when the Resumable Space Allocation feature is used. By default, its value is formed by combining the username, session identifier, and instance identifier.

RESUMABLE_NAME = finance1_load

Parameter RESUMABLE_TIMEOUT

The RESUMABLE_TIMEOUT parameter can be set only if the RESUMABLE parameter is set to true. It allows you to define the timeout, i.e. the maximum time for which the operation can be suspended when a space-related problem occurs. If the problem is not resolved within this time, the operation is aborted. By default, this timeout is 7,200 seconds.

RESUMABLE_TIMEOUT = 3600

SKIP parameter

The SKIP parameter is very convenient in situations when SQL*Loader aborts a job because of errors but has already committed some rows. It allows you to skip a certain number of records in the input file when running the SQL*Loader job a second time. The alternative is to truncate the table and restart the SQL*Loader job from the very beginning, which is not very convenient if a decent number of rows have already been loaded into the database tables.

SKIP = 235550

In this example, it is assumed that the first run was interrupted after the first 235,550 records had been loaded successfully, so exactly that many records are skipped on the second run. This information can be obtained either from the log file of the interrupted session or by querying the table directly.
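One way to obtain the value for SKIP is to read it from the log of the interrupted session. The sketch below is only an illustration: the function name rows_loaded and the file names are hypothetical, it assumes a single-table load in normal mode, and it assumes that no records were rejected or discarded before the interruption (otherwise more records have to be skipped than rows were loaded).

```shell
# Hypothetical helper: print the number from the
# "N Rows successfully loaded." line of a SQL*Loader log file.
rows_loaded() {
    awk '/Rows? successfully loaded/ { print $1; exit }' "$1"
}

# Example usage (file names are illustrative):
# sqlldr PARFILE=finance.par SKIP="$(rows_loaded finance.log)"
```

Only the first matching line is used, which is why the sketch is limited to loads into a single table.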

Generating data during the load

SQL*Loader can generate data for the columns being loaded. This means that you can load data without using any data file. Most often, however, data is generated only for one or more columns during a regular load from a data file. Below is a list of the types of data that SQL*Loader can generate.

Constant value. Using the CONSTANT clause, you can set a column to a constant value. In the following example, this clause specifies that every row loaded during a given session must have the value sysadm in the loaded_by column:

loaded_by CONSTANT "sysadm"

Expression value. With the EXPRESSION clause, you can set the column to the value of an SQL operator or a PL/SQL function, as shown below:

column_name EXPRESSION "SQL string"

Datafile record number. Using the RECNUM clause, you can set the column to the number of the record that caused this row to be loaded:

record_num RECNUM

System date. The sysdate variable can be used to set the column to the date of the data load:

loaded_date sysdate

Sequence. With the SEQUENCE function, you can generate unique values for a column. In the following example, this function specifies that numbering should start from the current maximum value of the loadseq column and that the value should be increased by one every time a row is inserted:

loadseq SEQUENCE(max,1)

Calling SQL*Loader

There are several ways to call the SQL*Loader utility. The standard syntax for calling SQL*Loader looks like this:

SQLLDR keyword=value [,keyword=value,...]

Below is an example of how to call SQL*Loader:

$ sqlldr USERID=nicholas/nicholas1 CONTROL=/u01/app/oracle/finance/finance.ctl \
DATA=/u01/app/oracle/oradata/load/finance.dat \
LOG=/u01/aapp/oracle/finance/log/finance.log \
ERRORS=0 DIRECT=true SKIP=235550 RESUMABLE=true RESUMABLE_TIMEOUT=7200

Just for the record! When calling SQL*Loader from the command line, the backslash character (\) at the end of a line means that the command continues on the next line. You can specify command-line parameters by name as well as by position.

For example, the parameter for the username and password always follows the keyword sqlldr. If a parameter is omitted, Oracle uses the default value for it. If you wish, you can add a comma after each parameter.

It is easy to see that the more parameters you need to use, the more information you have to provide on the command line. This approach has two drawbacks. First, typos and other mistakes are easy to make and cause confusion. Second, some operating systems limit the number of characters that can be entered on the command line. Fortunately, the same job can also be started with the following command, which is much simpler:

$ sqlldr PARFILE=/u01/app/oracle/admin/finance/load/finance.par

PARFILE specifies a parameter file, i.e. a file that can contain values for all command-line parameters. For example, for the load specifications shown in this chapter, this file looks like this:

USERID=nicholas/nicholas1

CONTROL='/u01/app/oracle/admin/finance/finance.ctl'

DATA='/app/oracle/oradata/load/finance.dat'

LOG='/u01/aapp/oracle/admin/finance/log/finance.log'

ERRORS=0

DIRECT=true

SKIP=235550

RESUMABLE=true

RESUMABLE_TIMEOUT=7200

Using a parameter file is a more elegant approach than entering all the parameters on the command line, and also a more logical one when you regularly need to run jobs with the same parameters. Any option specified on the command line overrides the value set for that parameter in the parameter file.
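For scripted loads, the parameter file itself can be generated by a script. The following is a minimal sketch, not a definitive recipe: the function name write_parfile is hypothetical, only a few of the parameters shown above are written, and restricting the file permissions is an added precaution because the file contains a password.

```shell
# Hypothetical helper: write a small SQL*Loader parameter file to the given path.
write_parfile() {
    (
        # The file will contain a password: create it readable by its owner only.
        umask 077
        cat > "$1" <<'EOF'
USERID=nicholas/nicholas1
CONTROL='/u01/app/oracle/admin/finance/finance.ctl'
ERRORS=0
DIRECT=true
EOF
    )
}

# Example usage (path is illustrative):
# write_parfile ./finance.par
# sqlldr PARFILE=./finance.par SKIP=235550
```

In the usage example, SKIP is given on the command line, so it would take precedence over any SKIP value in the parameter file.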

If you want to use the command line but prevent anyone from seeing the password as it is entered, you can call SQL*Loader in the following way:

$ sqlldr CONTROL=control.ctl

In this case, SQL*Loader will display a prompt to enter a username and password.

SQL*Loader log file

The SQL*Loader log file contains a lot of information about the session. It tells you how many records should have been loaded and how many were actually loaded, as well as which records could not be loaded and why. In addition, it describes the columns that were specified for the fields in the SQL*Loader control file. Listing 13.2 gives an example of a typical SQL*Loader log file.

SQL*Loader: Release 11.1.0.0.0 - Production on Sun Aug 24 14:04:26 2008

Control File: /u01/app/oracle/admin/fnfactsp/load/test.ctl

Data File: /u01/app/oracle/admin/fnfactsp/load/test.ctl

Bad File: /u01/app/oracle/admin/fnfactsp/load/test.badl

Discard File: none specified

(Allow all discards)

Number to load: ALL

Number to skip: 0

Errors allowed: 0

Bind array: 64 rows, max of 65536 bytes

Continuation: none specified

Path used: Conventional

Table TBLSTAGE1, loaded when ACTIVITY_TYPE != 0X48(character 'H')

and ACTIVITY_TYPE != 0X54(character 'T')

Insert option in effect for this table: APPEND

TRAILING NULLCOLS option in effect

Column Name             Position Len   Term Encl Datatype
----------------------- -------- ----- ---- ---- ---------
COUNCIL_NUMBER          FIRST    *     ,         CHARACTER
COMPANY                 NEXT     *     ,         CHARACTER
ACTIVITY_TYPE           NEXT     *     ,         CHARACTER
RECORD_NUMBER           NEXT     *     ,         CHARACTER
FUND_NUMBER             NEXT     *     ,         CHARACTER
BASE_ACCOUNT_NMBER      NEXT     *     ,         CHARACTER
FUNCTIONAL_CODE         NEXT     *     ,         CHARACTER
DEFERRED_STATUS         NEXT     *     ,         CHARACTER
CLASS                   NEXT     *     ,         CHARACTER
UPDATE_DATE                                      SYSDATE
UPDATED_BY                                       CONSTANT
    Value is 'sysadm'
BATCH_LOADED_BY                                  CONSTANT
    Value is 'sysadm'

/* Discarded Records Section: gives the complete list of discarded

records, including the reasons why they were discarded. */

Record 1: Discarded - failed all WHEN clauses.

Record 1527: Discarded - failed all WHEN clauses.

Table TBLSTAGE1:

/* Number of Rows Section: gives the number of rows successfully loaded

and the number of rows not loaded due to errors or because they failed

the WHEN conditions, if any. Here, two records failed the WHEN conditions. */

1525 Rows successfully loaded.

0 Rows not loaded due to data errors.

2 Rows not loaded because all WHEN clauses were failed.

0 Rows not loaded because all fields were null.

/* Memory Section: gives the bind array size chosen for the data load. */

Space for bind array: 99072 bytes(64 rows)

Read buffer bytes: 1048576

/* Logical Records Section: gives the total number of logical records

skipped, read, rejected, and discarded. */

Total logical records skipped: 0

Total logical records read: 1527

Total logical records rejected: 0

Total logical records discarded: 2

/* Date Section: gives the day and date of the data load. */

Run started on Sun Mar 06 14:04:26 2009

Run ended on Sun Mar 06 14:04:27 2009

/* Time Section: gives the time taken to complete the data load. */

Elapsed time was: 00:00:01.01

CPU time was: 00:00:00.27

When studying the log file, pay particular attention to how many logical records were read and which records were skipped, rejected, or discarded. If you run into any difficulties while running the job, the log file is the first place to look to find out whether the data records are being loaded or not.
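Checking these totals can be automated. The sketch below is an illustration only: the function name log_total and the file name are hypothetical, and it assumes that the log contains the "Total logical records ..." lines in the form shown in the listing above.

```shell
# Hypothetical helper: print one of the "Total logical records ..." counters.
# Arguments: log file, counter name (skipped, read, rejected or discarded).
log_total() {
    awk -v name="$2" -F': *' '
        $1 ~ ("^ *Total logical records " name "$") { print $2; exit }
    ' "$1"
}

# Example usage (file name is illustrative):
# [ "$(log_total finance.log rejected)" -eq 0 ] || echo "check the bad file"
```

The same function can be called with read or discarded to compare the number of records read with the number actually loaded.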

Using exit codes

The log file records a lot of information about the load, but Oracle also allows you to capture the exit code after each load. This makes it possible to check the results of a load that is run by a cron job or a shell script. If you use a Windows server to schedule load jobs, you can use the at command. The following are the key exit codes that can be found on UNIX and Linux operating systems:

- EX_SUCC 0 means that all rows were loaded successfully;

- EX_FAIL 1 indicates that some errors have been detected in the command line or syntax;

- EX_WARN 2 means that some or all rows have been rejected;

- EX_FTL 3 indicates that some errors have occurred in the operating system.
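In a shell script, the codes listed above can be translated into readable names before they are written to a job log. This is a minimal sketch for the UNIX and Linux values only; the function name sqlldr_status and the parameter file name are hypothetical.

```shell
# Hypothetical helper: translate a SQL*Loader exit code (UNIX/Linux values
# listed above) into its symbolic name.
sqlldr_status() {
    case "$1" in
        0) echo "EX_SUCC" ;;
        1) echo "EX_FAIL" ;;
        2) echo "EX_WARN" ;;
        3) echo "EX_FTL" ;;
        *) echo "UNKNOWN" ;;
    esac
}

# Typical use in a cron-driven script (parameter file name is illustrative):
# sqlldr PARFILE=finance.par
# echo "sqlldr finished with $(sqlldr_status $?)"
```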

Using the direct load method

So far, SQL*Loader has been considered in terms of the normal load mode. As we remember, the normal load method uses SQL INSERT statements to insert data into tables one bind array at a time.

The direct load method does not use SQL statements to place data in tables; instead, it formats Oracle data blocks and writes them directly to the database files. This direct writing process eliminates most of the overhead that occurs when SQL statements are executed to load tables.

Since the direct load method does not compete for database resources, it works much faster than the normal load method. For loading large amounts of data, the direct mode is the most suitable and perhaps the only effective method, for the simple reason that a load in normal mode would require more time than is available.

In addition to the obvious advantage of reducing the loading time, the direct mode also allows you to rebuild indexes and pre-sort data. In particular, it has the following advantages over the normal load mode.

- Loading is much faster than in normal mode because SQL statements are not used.

- Data is written to the database with multi-block asynchronous I/O operations, so writing is fast.

- There is an option to pre-sort data using efficient sorting routines.

- By setting UNRECOVERABLE to Y (UNRECOVERABLE=Y), it is possible to prevent any redo data from being written during loading.

- By using the temporary storage mechanism, index building can be done more efficiently than in normal load mode.

For your information! The normal load mode always generates redo records, while the direct load mode generates them only under certain conditions. In addition, in direct mode, insert triggers are not fired, whereas in normal mode they always fire during the load. Finally, in contrast to the normal mode, the direct mode prevents users from making any changes to the table being loaded.

Despite all the above, the direct load method also has some serious limitations. In particular, it cannot be used under the following conditions:

- when using clustered tables;

- when loading data into parent and child tables simultaneously;

- when loading data into VARRAY or BFILE columns;

- when loading between heterogeneous platforms using Oracle Net;

- if you want to use SQL functions during loading.

Just for the record! You cannot use any SQL functions in direct load mode. If you need to load large amounts of data and also transform it during the load, this can lead to problems.

The normal load mode lets you use SQL functions to transform data, but it is very slow compared to direct mode. Therefore, for loading large amounts of data, it may be preferable to use newer technologies for loading and transforming data, such as external tables or table functions.

Parameters that can be applied when using the direct load method

Several SQL*Loader parameters are designed specifically for the direct load method or are more suitable for it than for the normal load method. These parameters are described below.

- DIRECT. If you want to use the direct load method, you must set DIRECT to true (DIRECT=true).

- DATE_CACHE. The DATE_CACHE parameter is convenient when the same date or timestamp (TIMESTAMP) values are loaded repeatedly in direct mode. SQL*Loader has to convert date and time data every time it encounters it. Therefore, if the loaded data contains duplicate date and time values, setting DATE_CACHE reduces the number of unnecessary conversions and thus the processing time. By default, the DATE_CACHE parameter allows 1000 values to be kept in the cache. If the data contains no duplicate date and time values, or very few, the cache can be disabled altogether by setting the parameter to 0 (DATE_CACHE=0).

- ROWS. The ROWS parameter is important because it allows you to specify how many rows SQL*Loader should read from the input data file before saving the inserts to the tables. It is used to define the upper limit on the amount of data lost if the instance fails during a long SQL*Loader job. After reading the number of rows specified in this parameter, SQL*Loader stops loading data until the contents of all data buffers have been successfully written to the data files. This process is called a data save. For example, if SQL*Loader can load about 10,000 rows per minute, setting the ROWS parameter to 150,000 (ROWS=150000) causes data to be saved every 15 minutes.

- UNRECOVERABLE. The UNRECOVERABLE parameter minimizes the use of the redo log during data loading in direct mode (it is defined in the control file).

- SKIP_INDEX_MAINTENANCE. The SKIP_INDEX_MAINTENANCE parameter, when enabled (SKIP_INDEX_MAINTENANCE=true), tells SQL*Loader not to perform index maintenance during loading. It is set to false by default.

- SKIP_UNUSABLE_INDEXES. Setting SKIP_UNUSABLE_INDEXES to true ensures that SQL*Loader loads even tables whose indexes are in an unusable state. These indexes are not maintained by SQL*Loader, however. The default value for this parameter depends on the value of the SKIP_UNUSABLE_INDEXES initialization parameter, which is set to true by default.

- SORTED_INDEXES. The SORTED_INDEXES parameter tells SQL*Loader that the data has already been sorted on certain indexes, which helps to speed up the loading process.

- COLUMNARRAYROWS. This parameter allows you to specify how many rows should be loaded before the stream buffer is built. For example, if you set it to 100,000 (COLUMNARRAYROWS=100000), 100,000 rows are loaded first. The size of the column array during a direct mode load therefore depends on the value of this parameter. The default value of this parameter was 5000 rows for the author on a UNIX server.

- STREAMSIZE. The STREAMSIZE parameter sets the size of the stream buffer. For the author on a UNIX server, for example, this size was 256,000 bytes by default; if you want to change it, set the STREAMSIZE parameter, for example, STREAMSIZE=51200.

- MULTITHREADING. When the MULTITHREADING parameter is enabled, the operations that convert column arrays to stream buffers and then load these stream buffers are executed in parallel. On machines with several CPUs, this parameter is enabled by default (set to true). If you want, you can turn it off by setting it to false (MULTITHREADING=false).
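The arithmetic behind the ROWS example in the list above can be sketched in a couple of lines of shell. The function name save_interval_minutes is hypothetical, and the load rate is an assumed, measured value that you would have to supply yourself.

```shell
# Hypothetical helper: minutes between data saves for a given ROWS value.
# Arguments: ROWS value, assumed load rate in rows per minute.
save_interval_minutes() {
    echo $(( $1 / $2 ))
}

save_interval_minutes 150000 10000   # prints 15
```

Working backwards is just as simple: to save data roughly every 5 minutes at the same assumed rate, ROWS would be set to about 50000.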

Managing constraints and triggers when using the direct load method

The direct load method inserts data directly into data files by formatting data blocks. Since INSERT statements are not used, table constraints and triggers are not applied systematically in direct mode. Instead, all triggers are disabled, as well as some integrity constraints.

SQL*Loader automatically disables all foreign key and check constraints, but it still enforces NOT NULL, unique, and primary key constraints. When the job is finished, SQL*Loader automatically re-enables all disabled constraints if the REENABLE clause was specified. Otherwise, you will need to enable them manually. As for triggers, they are always re-enabled automatically after the load has finished.

Tips for optimal utilize of SQL*Loader

To use SQL*Loader in an optimal way, especially when loading large amounts of data and/or when the tables have multiple indexes and constraints, it is recommended to do the following.

- Try to use the direct load method as often as possible. It works much faster than the normal load method.

- Wherever possible (with direct load mode), use the UNRECOVERABLE=true option. This saves a decent amount of time because the newly loaded data does not need to be recorded in the redo log file. Media recovery remains possible for all other database users, and a new SQL*Loader session can always be started if a problem occurs.

- Minimize the use of the NULLIF and DEFAULTIF parameters. These clauses must be evaluated for every row to which they apply.

- Limit the number of data type and character set conversions because they slow down the processing.

- Wherever possible, use positions rather than delimiters for the fields. SQL*Loader moves from field to field much faster when their positions are provided.

- Map physical records to logical records one to one.

- Disable the constraints before starting the load because they slow it down. Of course, errors may sometimes appear when you enable the constraints again, but a much faster data load is worth it, particularly in the case of big tables.

- Specify the SORTED_INDEXES clause when you use the direct load method to optimize the speed of loading.

- Drop the indexes associated with the tables before starting the load when large volumes of data are being loaded. If it is impossible to drop the indexes, you can make them unusable and use the SKIP_UNUSABLE_INDEXES and SKIP_INDEX_MAINTENANCE clauses during loading.

Some useful tricks for loading data using SQL*Loader

Using SQL*Loader is an effective approach, but not without its share of tricks. This section describes how to perform some special types of operations while loading data.

Using the WHEN clause during data loads

The WHEN clause can be used during data loads to limit the loaded data to only those rows that meet certain conditions. For example, it can be used to select from a data file only those records that contain a field meeting specific criteria. Below is an example demonstrating the use of the WHEN clause in the SQL*Loader control file:

LOAD DATA

INFILE *

INTO TABLE stagetbl

APPEND

WHEN (activity_type <>'H') and (activity_type <>'T')

FIELDS TERMINATED BY ','

TRAILING NULLCOLS

/* Here are the columns of the table... */

BEGINDATA

/* Here comes the data... */

Here, the condition in the WHEN clause specifies that all records in which the field corresponding to the activity_type column of the stagetbl table contains either H or T must be discarded; only the remaining records are loaded.
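Before running a load, the effect of this WHEN clause can be previewed outside the database. The sketch below is only an approximation under stated assumptions: the function name preview_when is hypothetical, the data is assumed to be in a separate comma-separated file without quoted fields, and activity_type is assumed to be the third field (as in the column listing of the log file shown earlier).

```shell
# Hypothetical helper: print the records that would pass the WHEN clause
# (activity_type <> 'H') and (activity_type <> 'T').
# Assumes activity_type is the third comma-separated field.
preview_when() {
    awk -F',' '$3 != "H" && $3 != "T"' "$1"
}

# Example usage (file name is illustrative):
# preview_when finance.dat | wc -l
```

Counting the output lines gives a rough idea of how many records should end up in the table and how many in the discard file.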

Loading the username in the table

You can use the USER pseudo-variable to insert a username into a table during the load. Below is an example that illustrates how to use this variable. Note that the stagetbl target table must contain a column named loaded_by so that SQL*Loader can insert the username into it.

LOAD DATA

INFILE *

INTO TABLE stagetbl

INSERT

(loaded_by "USER")

/* Here are the columns of the table, and then the data itself... */

Loading large data fields into a table

When you try to load into a table any field larger than 255 bytes, SQL*Loader is not able to load the data, even if the column is defined as VARCHAR(2000) or CLOB, and it generates the error message Field in data file exceeds the maximum length.

To load a large field, you must specify the size of the corresponding table column in the control file when mapping the table columns to the data fields, as shown in the following example (where the corresponding column is named text):

LOAD DATA

INFILE '/u01/app/oracle/oradata/load/testload.txt'

INSERT INTO TABLE test123

FIELDS TERMINATED BY ','

(text CHAR(2000))

Loading the sequence number in the table

Suppose there is a sequence named test_seq and its value must be incremented each time a new data record is loaded into the table. This behavior can be ensured in the following way:

LOAD DATA

INFILE '/u01/app/oracle/oradata/load/testload.txt'

INSERT INTO TABLE test123

(test_seq.nextval, . .)

Loading data from a table into an ASCII file

Sometimes it is necessary to extract data from a database table into flat files, for example, to use them to load data into Oracle tables located elsewhere. If you have a lot of such tables, you can write complex scripts, but if only a few tables are involved, the following simple method of extracting data with SQL*Plus commands is also quite suitable:

SET TERMOUT OFF

SET PAGESIZE 0

SET ECHO OFF

SET FEED OFF

SET HEAD OFF

SET LINESIZE 100

COLUMN customer_id FORMAT 999,999

COLUMN first_name FORMAT a15

COLUMN last_name FORMAT a25

SPOOL test.txt

SELECT customer_id,first_name,last_name FROM customer;

SPOOL OFF

You can also use the UTL_FILE package to write data to text files.

Dropping indexes before loading large amounts of data

There are two main reasons why you should seriously consider dropping the indexes associated with a big table before loading data in direct mode with the NOLOGGING option. First, loading the table data together with its indexes may take more time. Second, if the indexes are left active, changes in their structure during loading generate redo records.

Tip. When you load data with the NOLOGGING option, a decent amount of redo records is still generated to record the changes made to the indexes. In addition, some more redo information is generated to support the data dictionary, even during a NOLOGGING data load. Therefore, the best strategy in this case is to drop the indexes and recreate them after the tables have been loaded.

When loading in direct mode, the instance may crash somewhere halfway through, the space that SQL*Loader needs to update an index may run out, or duplicate index key values may occur. All such situations leave the indexes in an unusable state: after the instance is recovered, the indexes cannot be used. To avoid these situations, it may also be better to create the indexes after the load has finished.

Executing data loading in several tables

You can use the same SQL*Loader session to load data into several tables. Here is an example of how to load data into two tables at once:

LOAD DATA

INFILE *

INSERT

INTO TABLE dept

WHEN recid = 1

(recid FILLER POSITION(1:1) INTEGER EXTERNAL,

POSITION(3:4) INTEGER EXTERNAL,

dname POSITION(8:21) CHAR)

INTO TABLE emp

WHEN recid <> 1

(recid FILLER POSITION(1:1) INTEGER EXTERNAL,

POSITION(3:6) INTEGER EXTERNAL,

ename POSITION(8:17) CHAR,

POSITION(19:20) INTEGER EXTERNAL)

In this example, data from the same data file is loaded simultaneously into two tables, dept and emp, depending on whether the recid field contains the value 1 or not.

Intercepting SQL*Loader error codes

Below is a simple example of how you can intercept SQL*Loader error codes:

$ sqlldr PARFILE=test.par
retcode=$?
# Any non-zero exit code means the load did not complete cleanly.
if [[ $retcode -ne 0 ]]
then
mv ${ImpDir}/${Fil} ${InvalidLoadDir}/.${Dstamp}.${Fil}
writeLog $func "Load Error" "load error:${retcode} on file ${Fil}"
else
sqlplus / <<___EOF
/* Here you can place any SQL statements to process the data
that was loaded successfully */
___EOF
fi

Loading XML data into Oracle XML DB

SQL*Loader supports the XML data type for columns. If a table has such a column, SQL*Loader can therefore be used to load XML data into it. SQL*Loader treats XML columns as CLOB (Character Large Object) columns.

In addition, Oracle allows you to load XML data both from the primary data file and from an external LOB file (LOB stands for large object), to use both fixed-length and delimited fields, and to read the entire contents of a file into a single LOB field.

Tags: Oracle, Oracle Database, Oracle SQL

Source: https://www.sqlsplus.com/sqlloader-upload-to-oracle/
